Is Building Intelligent Systems the Key to Balancing AI and Agility?

Drawing on a conversation between Unito's co-founder Marc Boscher and Cirface's CEO Marquis Murray, we explore the realities of building AI-powered intelligent systems. The key lessons: simplify first, build for change, prioritize distributed truth over a single source of truth, and iterate on trust. Context is critical for AI agents, and the most successful implementations will augment human judgment, not replace it.


What Are Intelligent Systems and Why Do They Matter?

The term "intelligent systems" refers to computer systems that can learn, adapt, and make decisions based on data inputs, user interactions, and changing environments. These systems incorporate artificial intelligence techniques like machine learning, natural language processing, and knowledge representation to solve complex problems and automate cognitive tasks.

When building one, the key is to start by removing complexity:

What can we eliminate from a poor or overly complex system?

Where are things falling through the cracks?

Where are people getting stuck or spending too much time?

Only then should you identify where AI can support people, fill gaps, or take over tasks that consume too much human time.

How Can Organizations Avoid the Chaos of Tool Sprawl?

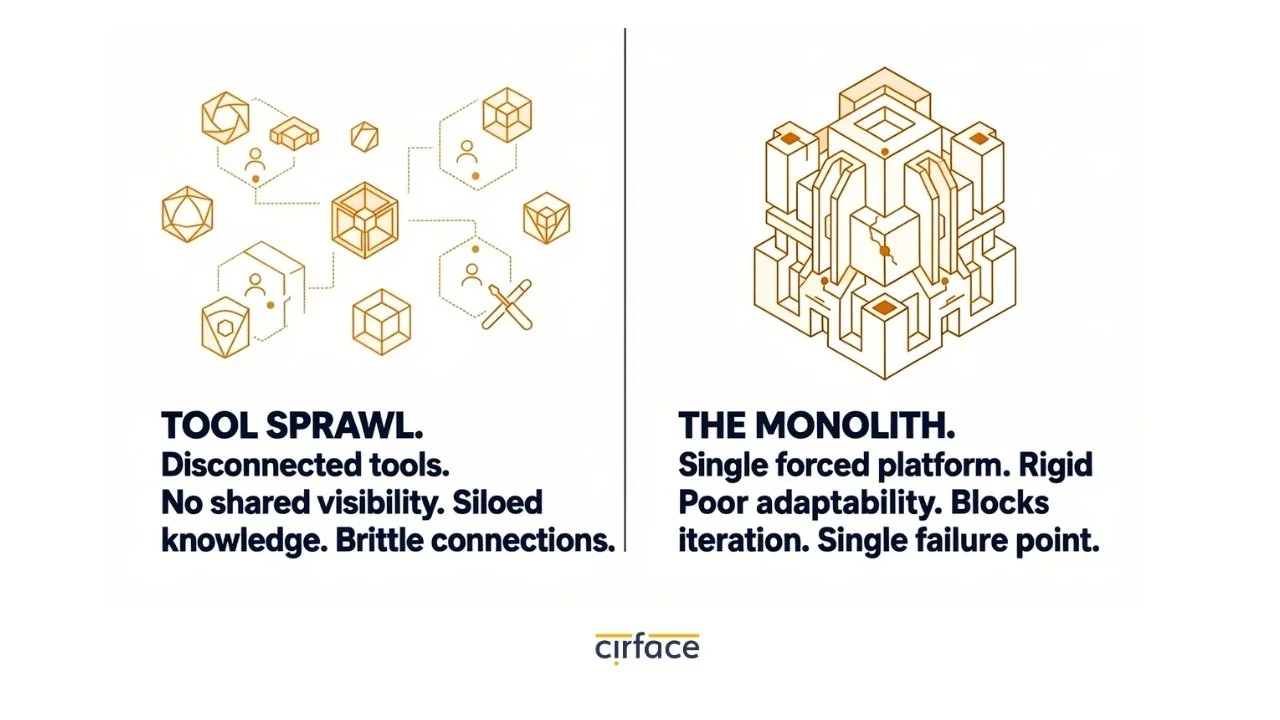

Organizations naturally tend toward chaos as software, tools, and processes accumulate over time. Two common failure modes emerge:

Tool Sprawl - People optimize their individual workflows but lose sight of the bigger picture. Everyone has their preferred tools, but nothing connects properly.

The Overcompensation - Someone decides to consolidate everything into one tool that "does it all." This creates a rigid monolith that can't adapt.

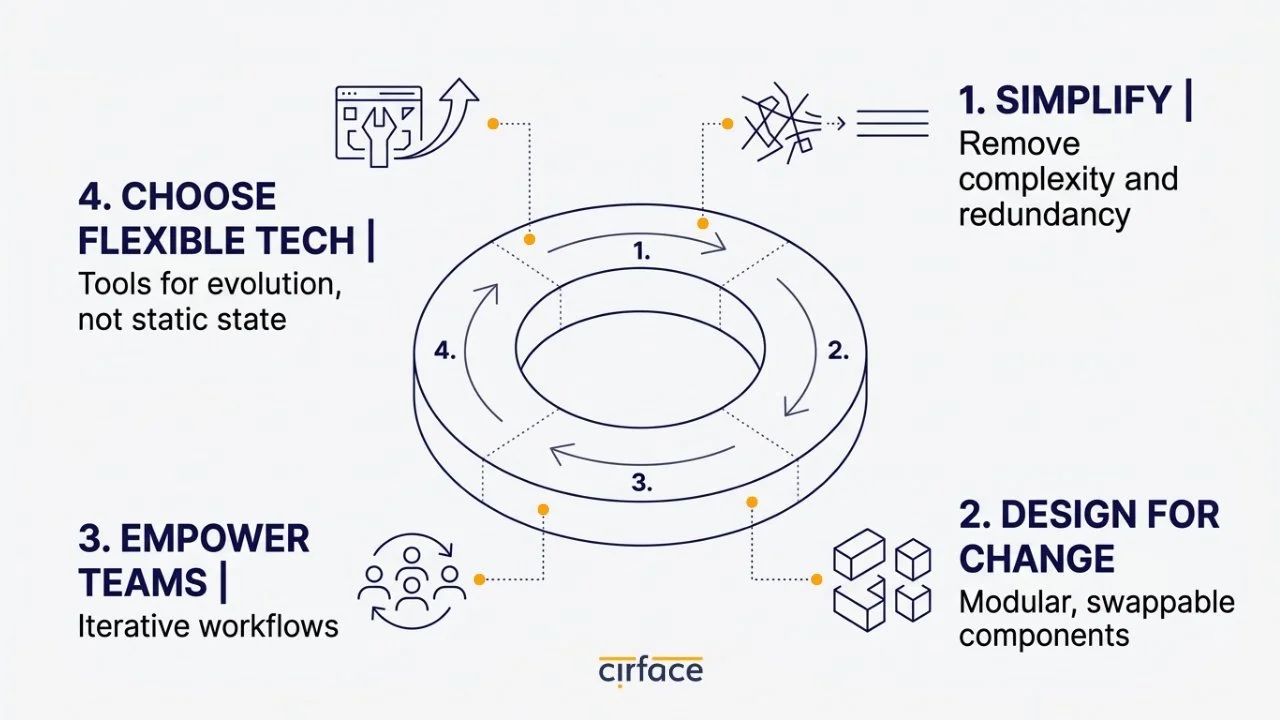

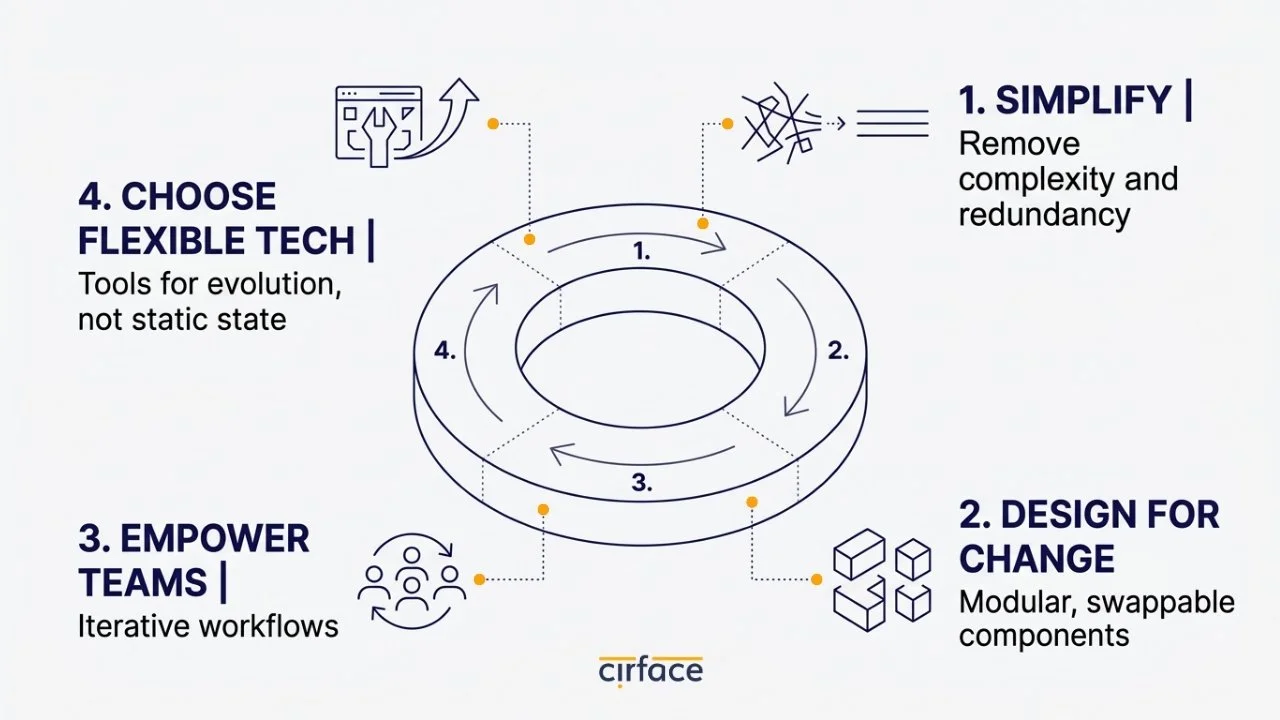

The reality is that your system will change. Things will break. People will come and go. New tools will emerge. Build for iteration, not perfection:

Assume there will be changes

Keep things simple enough to be moved and swapped

Empower people to iterate on their own workflows

Choose technologies that support flexibility

Is the Single Source of Truth a Myth?

Here's a controversial statement: the single source of truth is a myth. Every part of your organization holds a piece of the truth. The question becomes: how many systems do you need to connect to get the full picture?

Integration technology plays a crucial role here. Rather than forcing everything into one place, modern integration lets parts of that truth live in multiple systems at once. Wherever you consume the information, it feels like the source of truth, but it doesn't live only there.
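To make the idea concrete, here is a minimal Python sketch of field-level two-way sync. It assumes each system keeps its own copy of a shared record and that the most recent edit to a mapped field wins (a last-write-wins rule). The `Record`, `FieldValue`, and `sync` names, the field names, and the conflict rule are all hypothetical illustrations, not how Unito or any specific integration tool works. Fields that aren't mapped stay in the system that owns them, so each tool keeps its own piece of the truth.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class FieldValue:
    value: object
    updated_at: float  # time of the last edit, e.g. a Unix timestamp


@dataclass
class Record:
    """One system's copy of a shared work item (hypothetical model)."""
    system: str
    fields: dict[str, FieldValue] = field(default_factory=dict)


def sync(a: Record, b: Record, mapped_fields: set[str]) -> None:
    """Two-way, field-level sync with a last-write-wins rule.

    Only fields listed in `mapped_fields` are shared; every other field
    stays in the system that owns it.
    """
    for name in mapped_fields:
        fa, fb = a.fields.get(name), b.fields.get(name)
        if fa is None and fb is None:
            continue  # neither system has a value yet
        # The newer edit wins; a present value beats a missing one.
        if fb is None or (fa is not None and fa.updated_at >= fb.updated_at):
            winner = fa
        else:
            winner = fb
        a.fields[name] = winner
        b.fields[name] = winner


# Usage: a CRM and a project tracker each hold part of the picture.
crm = Record("crm", {"status": FieldValue("Negotiation", 100.0),
                     "deal_value": FieldValue(50_000, 90.0)})
tracker = Record("tracker", {"status": FieldValue("Blocked", 120.0),
                             "sprint": FieldValue("S14", 95.0)})
sync(crm, tracker, mapped_fields={"status"})
print(crm.fields["status"].value)      # Blocked (newer edit, made in the tracker)
print("deal_value" in tracker.fields)  # False (unmapped, stays in the CRM)
```

Last-write-wins is only one possible conflict rule; real integrations may let you choose which side takes precedence per field.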

How Can We Build Trust in AI Agents?

At Cirface, we developed an AI teammate called the Sales Assistant to handle a critical part of our sales process. Here's how we built trust systematically:

Knowledge Transfer - We fed the AI teammate everything about our sales process—every document, agreement, policy, and procedure.

Structured Conversation - When the agent receives a call transcript and prospect documentation, it analyzes everything and reports back. Then we have a conversation about it, providing feedback.

Iteration and Validation - In the beginning, there was significant back-and-forth to verify the agent understood our requirements. Over time, we learned to trust it.

Team Adoption - Once the system was vetted and trained on our knowledge, we could confidently tell other sales team members: "This is our system. It's been tested. I trust it."

The timeline? About six weeks of iteration from rough initial versions to a trusted, standardized system. Now our sales team uses it daily.
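The conversation doesn't say how Cirface decided the agent had earned that trust. As a purely hypothetical illustration, one way to make the judgment more explicit is to log whether reviewers accept each output without edits and track the acceptance rate over a rolling window. The `TrustTracker` class, the window size, and the threshold below are assumptions made for this sketch.

```python
from collections import deque


class TrustTracker:
    """Rolling record of reviewer verdicts on an agent's outputs.

    Hypothetical helper: the window and threshold are illustrative
    assumptions, not values reported by Cirface.
    """

    def __init__(self, window: int = 50, threshold: float = 0.95):
        self.verdicts = deque(maxlen=window)  # True = accepted without edits
        self.threshold = threshold

    def record(self, accepted: bool) -> None:
        self.verdicts.append(accepted)  # oldest verdict drops off when full

    def acceptance_rate(self) -> float:
        if not self.verdicts:
            return 0.0
        return sum(self.verdicts) / len(self.verdicts)

    def ready_to_relax_review(self) -> bool:
        # Suggest easing review only once the window is full and the
        # recent acceptance rate meets the threshold; a human still decides.
        full = len(self.verdicts) == self.verdicts.maxlen
        return full and self.acceptance_rate() >= self.threshold


trust = TrustTracker(window=4, threshold=0.75)
for accepted in [False, True, True, True]:
    trust.record(accepted)
print(trust.acceptance_rate())        # 0.75
print(trust.ready_to_relax_review())  # True
```

Requiring a full window keeps a handful of early successes from being mistaken for a track record.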

Why Is Context Critical for AI Agents?

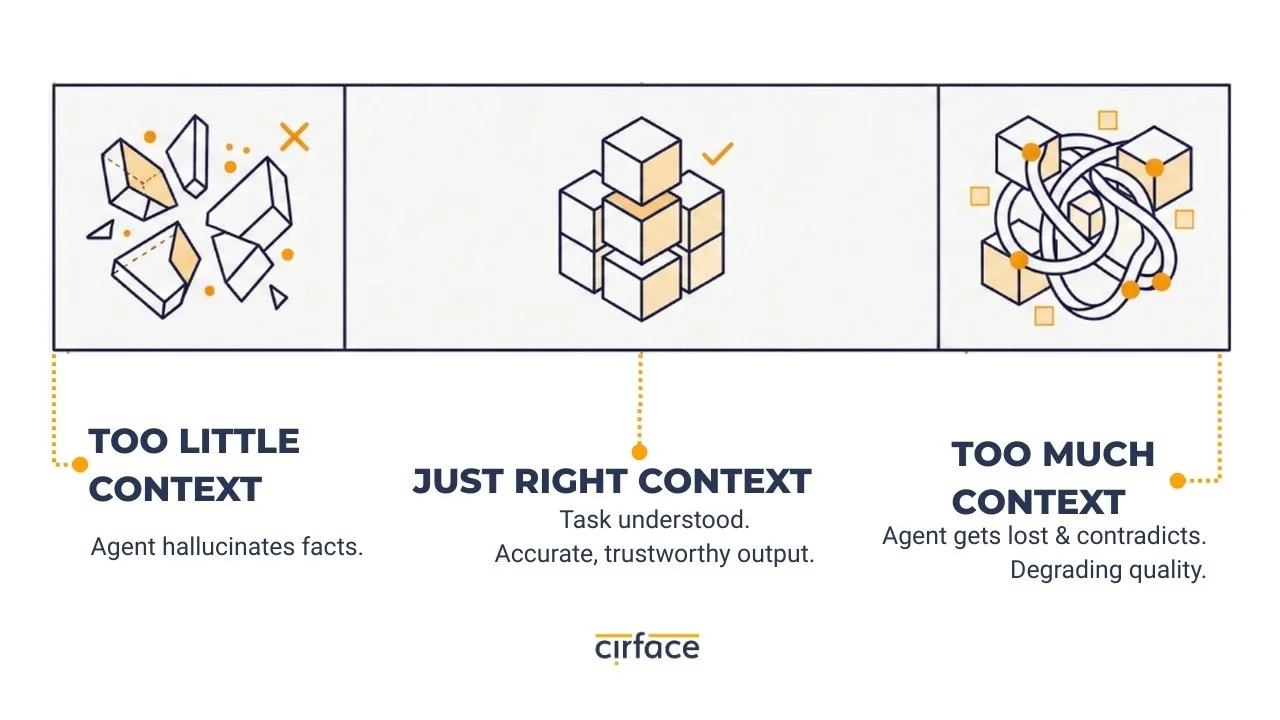

For AI agents to deliver value, they need the right context at the right time—and it has to be the right amount. Not too much. Not everything. Just what's relevant.

There's a craft to providing context to AI agents:

Too little context → they'll invent information

Too much context → they'll get lost and confused

This is one of the biggest challenges in getting intelligent systems to production-grade, customer-facing quality.
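A minimal sketch of scoping context might look like the following. It assumes a simple keyword-overlap score as a stand-in for real relevance ranking (real systems often use embeddings or search) and a word count as a rough proxy for tokens. `select_context`, its parameters, and the sample snippets are hypothetical. Snippets below a minimum relevance are dropped so the agent isn't flooded, a budget caps the total, and an empty result is returned explicitly so the caller can fetch more context instead of letting the agent guess.

```python
import re


def words(text: str) -> set[str]:
    """Lowercased word set: a crude stand-in for real relevance scoring."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def select_context(task: str, snippets: list[str],
                   max_words: int = 200, min_overlap: int = 2) -> list[str]:
    """Choose relevant snippets for a task within a word budget.

    - Snippets sharing fewer than `min_overlap` words with the task are
      dropped, to avoid burying the agent in noise (too much context).
    - `max_words` caps the total size (a rough proxy for a token budget).
    - An empty list tells the caller to find more context or ask a human,
      rather than letting the agent guess (too little context).
    """
    task_words = words(task)
    scored = [(len(task_words & words(s)), s) for s in snippets]
    relevant = [(score, s) for score, s in scored if score >= min_overlap]
    # Most relevant first; Python's sort is stable, so ties keep input order.
    relevant.sort(key=lambda pair: pair[0], reverse=True)

    chosen, used = [], 0
    for _, s in relevant:
        n = len(s.split())
        if used + n > max_words:
            continue  # skip snippets that would exceed the budget
        chosen.append(s)
        used += n
    return chosen


docs = [
    "Discount policy: discounts above 15% need approval from the sales director.",
    "Office hours: the office is open 9 to 5.",
    "Approval workflow: discount approvals are logged in the CRM.",
]
task = "Can I offer this prospect a 20% discount without approval?"
print(select_context(task, docs))  # the two discount/approval snippets, not office hours
```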

What's the Path Forward for Autonomous Systems?

Right now, most successful AI implementations operate in a semi-autonomous model with a human always in the loop checking outputs.

The key to moving toward full autonomy is continuing to:

Iterate on instructions and examples

Craft the right context carefully

Validate inputs to ensure quality outputs

Build systems that are declarative and specific about the context sandbox agents operate within
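The last two points can be sketched as a declarative spec that states which sources an agent may read and which inputs it needs. The spec is checked before each run, and outputs are routed to a human reviewer for as long as the spec requires it. The `AgentSpec` schema, the source names, and the `validate_inputs` and `route_output` helpers are hypothetical, assuming a plain-Python setup rather than any particular agent framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpec:
    """Declarative description of an agent's context sandbox (hypothetical schema)."""
    name: str
    allowed_sources: frozenset[str]    # systems the agent may read from
    required_inputs: tuple[str, ...]   # inputs that must be present and non-empty
    human_review: bool = True          # semi-autonomous by default


def validate_inputs(spec: AgentSpec, inputs: dict[str, str],
                    sources: set[str]) -> list[str]:
    """Return a list of problems; an empty list means the run may proceed."""
    problems = []
    for key in spec.required_inputs:
        if not inputs.get(key, "").strip():
            problems.append(f"missing input: {key}")
    for src in sorted(sources - spec.allowed_sources):
        problems.append(f"source outside sandbox: {src}")
    return problems


def route_output(spec: AgentSpec, draft: str) -> str:
    """Send drafts to a human reviewer while the spec requires it."""
    if spec.human_review:
        return f"REVIEW QUEUE: {draft}"
    return f"PUBLISHED: {draft}"


sales_assistant = AgentSpec(
    name="sales-assistant",
    allowed_sources=frozenset({"crm", "sales_playbook"}),
    required_inputs=("call_transcript", "prospect_docs"),
)
problems = validate_inputs(
    sales_assistant,
    {"call_transcript": "Prospect asked about pricing tiers.", "prospect_docs": ""},
    sources={"crm", "hr_records"},
)
print(problems)
# ['missing input: prospect_docs', 'source outside sandbox: hr_records']
```

Keeping the spec as data rather than scattering the rules through the agent's code makes the sandbox easy to review and to loosen step by step as trust grows.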

Frequently Asked Questions

How do I know if my organization needs an intelligent system?

Consider an intelligent system if you're dealing with tool sprawl, spending too much time on routine tasks, or struggling to get a full picture from your data. Start by simplifying and identifying specific gaps AI could fill.

What's the best way to introduce AI into our workflows?

Start small with a specific use case. Clearly define the context and knowledge the AI needs. Implement with a human-in-the-loop model first, then gradually increase autonomy as trust grows. Empower your team to iterate.

How long does it take to build trust in an AI system?

In Cirface's experience with the Sales Assistant, it took about six weeks of close iteration to go from rough drafts to a trusted system. Timelines vary, however, with use case complexity and risk level.

Will AI replace human judgment?

No, the goal of intelligent systems is to augment human judgment, not replace it. AI can remove friction and handle routine tasks, freeing people up for work that requires creativity, strategy, and relationship-building.

How do I avoid AI making things up?

Provide the right amount of context: neither too little nor too much. Scoping the knowledge and context you give an AI is a craft, and validating its outputs is also key.

What if my system or team changes?

Assume change will happen. Build your intelligent system for iteration, not perfection. Use technologies that enable flexibility so components can be adjusted as needed. Empower your team to evolve their workflows.